Call Us Today

We won a verdict for a single plaintiff in an action against a multi-national pharmaceutical company.

We won a jury verdict for a client who suffered head trauma and permanent scarring in an ATV rollover case in South Texas.

We won a settlement against a Fortune 500 pipeline company for burn victims of a plant explosion in South Texas.

We won a settlement for a family who lost a loved one in a trucking crash.

Families in Texas and across the United States are coming forward after learning that children are being targeted on online platforms such as Roblox, Discord, Snapchat, and Meta. These companies are being named in lawsuits for allowing predators to exploit minors, causing devastating harm and financial losses. When a Roblox lawsuit or related claim arises, it is often part of a larger mass tort action involving hundreds of victims. Survivors and families in Texas have powerful legal options, and Kherkher Garcia LLP is here to stand beside them.

Online platforms like Roblox and Discord are more than just entertainment spaces; for many children, they function as daily social hubs. But when companies fail to protect young users, the consequences can be devastating. What begins as harmless online interaction can lead to grooming and long-term trauma. As a result, lawsuits are emerging across the country claiming that Roblox, Discord, and similar platforms are failing to implement basic protections.

These Roblox lawsuits—whether filed in Houston, Dallas, or other jurisdictions—are not just isolated cases. They involve children and teenagers who were victimized online, as well as parents who now face the lasting emotional and financial consequences. Many actions are pursued as mass tort cases in federal court, sometimes through Multidistrict Litigation (MDL). This approach gives families strength in:

Parents are pursuing these cases to secure justice for their children, obtain compensation for therapy and emotional harm, and pressure technology companies into creating safer platforms.

Kherkher Garcia specializes in Roblox and Discord sexual exploitation, grooming, and abuse claims and will pursue the maximum compensation you deserve. We have over 25 years of experience representing sexual assault victims.

If you ever come across Child Sexual Abuse Material (CSAM) on platforms like Roblox or Discord, it is crucial to handle it correctly. CSAM refers to any visual depiction of a minor engaged in sexually explicit conduct. Possessing, sharing, or distributing it, even to report it, is a serious crime. Do not delete, download, or share the material with anyone, including an attorney or law firm. Instead, take note of what you found, such as usernames, links, or screenshots that do not contain illegal content. It is okay to immediately contact law enforcement with that information. However, you should not send explicit material to law enforcement or anyone else.

The appropriate authorities, such as your local police department or the National Center for Missing & Exploited Children (NCMEC), are equipped to investigate safely and legally. Your report could help protect a child and stop further abuse. Always let trained investigators handle CSAM. Never attempt to manage or share it yourself.

Roblox is a global online gaming platform where children play, build, and socialize in virtual environments. The company reports tens of millions of daily active users worldwide, and industry research indicates a large share of users are under 13. While marketed as a creative, safe space, the same social features are being misused by offenders who use in-game chat, private servers, and Robux to approach minors under false pretenses.

The concern extends beyond Roblox itself. Discord, Snapchat, and even Meta-owned apps such as Instagram and Messenger are frequently used in connection with Roblox grooming incidents. Offenders may begin contact on Roblox, but then encourage children to migrate to other platforms where monitoring is weaker.

Our trial lawyers will go the distance against corporations and insurance companies to win the maximum compensation in your case.

We stand ready to fight for you against the injustices caused by negligent actions throughout the state of Texas and across the Nation.

Discord is a widely used communication app for gamers, with group servers, private chats, and voice/video channels. Those same features can be misused to contact and isolate minors. Allegations against Discord focus on grooming, sexual exploitation, and the sharing of abusive material in poorly monitored spaces. Lawsuits contend that Discord ignores clear warning signs, allows predators to thrive in private channels, and fails to adopt stronger protective measures.

Under Sec. 15.032 of the Texas Penal Code, it is a crime to persuade, entice, or coerce a child under 18 into conduct that could lead to sexual exploitation, or to attempt to do so. This statute reflects how seriously Texas treats grooming behaviors, which often begin online through casual conversations and escalate into attempts to control or manipulate minors. On platforms like Roblox, Discord, Snapchat, and Meta-owned apps, offenders exploit direct messaging, private servers, disappearing messages, and hidden chats to initiate and sustain this manipulation.

Predators often begin by making seemingly innocent contact in public in-game chats, using compliments, shared interests, or small gifts to establish trust. Once initial contact is made, they frequently encourage children to move into private servers or direct messages, where monitoring is far more limited. From there, many offenders shift conversations to third-party platforms such as Discord, Snapchat, or encrypted apps, making oversight nearly impossible. This pattern allows abusers to isolate minors, conceal their actions, and escalate grooming or exploitation without detection.

A common tactic in online grooming cases is convincing a child to leave the platform where contact began and continue the conversation elsewhere. Predators may suggest moving from Roblox into Discord servers, where voice chats and private channels are harder to monitor, or to Snapchat, where disappearing messages make evidence difficult to preserve. Others use apps like Instagram or Messenger, exploiting features such as private DMs and video calls. This shift reduces oversight, isolates the victim, and increases the risk of exploitation.

No Fees Unless We Win

Many complaints allege that Roblox and Discord create conditions that allow predators to operate despite stated rules. Plaintiffs allege the platforms rely heavily on automated filters that miss slang, coded language, and image-based grooming, while human review is too limited to mitigate risk at scale. Reporting tools and blocking features, they claim, are inconsistently enforced or easily evaded in private servers and DMs. These allegations form a core theory of liability in each Roblox lawsuit, asserting foreseeable harms were not reasonably prevented through available safeguards.

Plaintiffs further contend that both companies fail to reliably verify a user’s age before granting access to open chats, voice channels, or payment features. Because self-reported birthdays and simple prompts are easy to bypass, adults can pose as teens and contact children undetected. Allegations also target insufficient parental controls and weak enforcement of minimum-age policies across linked accounts. In a Roblox lawsuit, inadequate age-gating is framed as a foreseeable defect that enables grooming, off-platform coercion, and sexual exploitation.

Another set of claims asserts that growth and monetization are prioritized over robust safety engineering. Plaintiffs point to design choices that drive engagement—persistent notifications, frictionless friend requests, and in-game purchases—without commensurate investment in trust-and-safety staffing, testing, or escalation protocols. They allege the platforms benefit from increased time-on-site and spending while foreseeable risks to minors persist. This profit-over-safety theory seeks punitive exposure where evidence shows conscious indifference to known dangers, and demands corporate reforms alongside compensation for affected children and families.

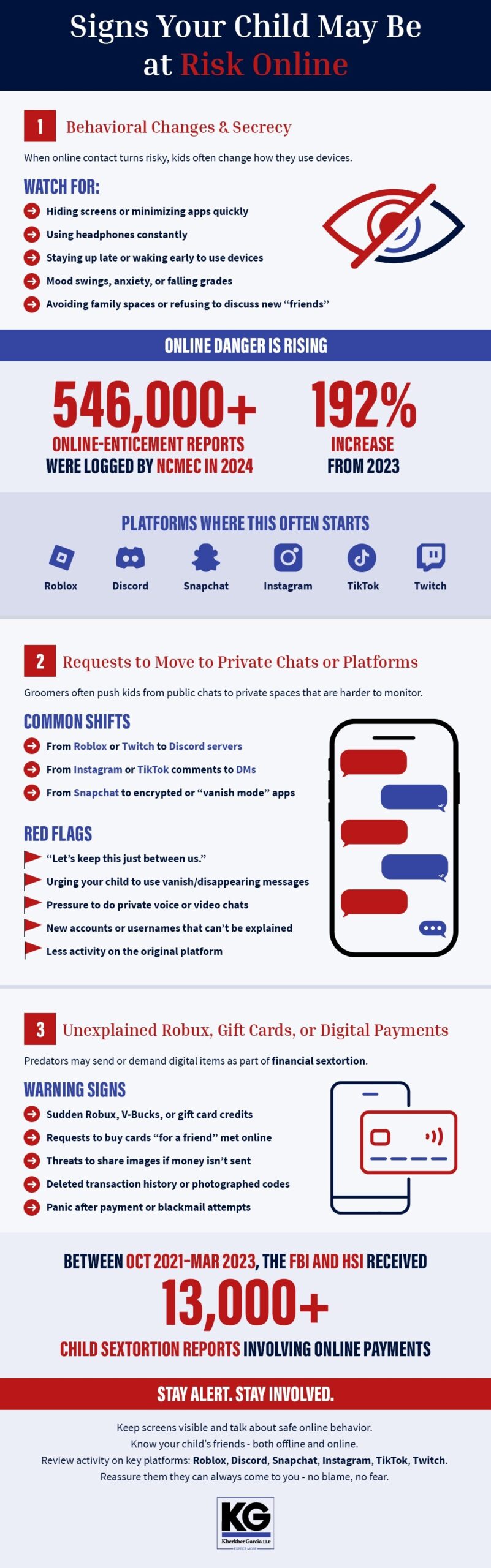

When online contact turns risky, many children start hiding screens, minimizing apps, or avoiding shared spaces at home. Watch for sudden mood swings, sleep problems, slipping grades, or anxiety around devices, especially if these changes follow increased gaming or chatting. The surge in online-enticement reports underscores why vigilance matters. NCMEC logged more than 546,000 online-enticement reports in 2024, a 192% jump over 2023, reflecting a growing threat landscape for families. What to watch for (parents):

A common grooming step is pushing a child from a public space (e.g., game chat) to private servers or DMs, then to apps with disappearing messages. Predators leverage the fact that most teens already use multiple social platforms, making the shift feel “normal.” Recent survey data shows that a majority of U.S. teens use Instagram and Snapchat, illustrating how easily contact can migrate off the original app and out of view. Red flags to consider:

Contact Our Roblox and Discord Lawyers in Houston

Predators may send in-game currency, gift cards, or small payments to build leverage—or make coercive demands tied to images or continued contact. Federal agencies report thousands of cases where threats and demands target minors online, often involving gift cards or digital payments.

Before anything else, prioritize your child’s safety and preserve information. Online grooming and coercive exploitation often move quickly across multiple apps, so fast, calm action helps protect your child and strengthens any future claim. The steps below reflect guidance from child-protection and public-safety authorities and are designed to keep evidence intact, connect you with the right investigators, and support your child’s health and recovery.

Save everything. Stop the conversation, but do not delete accounts, messages, or files. Download or screenshot chats, images (only non-explicit), usernames, user IDs, server names, and profile links. Preserve the original files whenever possible, as they carry timestamps and metadata. As noted above, parents should never download, save, screenshot, or share explicit material involving minors, even to law enforcement or attorneys.

Back up evidence to a secure drive, and keep a simple timeline (dates, platforms used, what was said). Public-safety guidance warns against complying with threats or demands; cutting off contact and preserving evidence are the priority steps.

Families should file a report with the National Center for Missing & Exploited Children’s CyberTipline, the nation’s centralized system for suspected online child exploitation. Trained analysts then route reports to the appropriate agency. In Texas—including Houston and Dallas—families can also work with local police and the Internet Crimes Against Children (ICAC) Task Force network to coordinate digital forensics and victim services. Reporting promptly helps investigators secure accounts, subpoenas, and preservation requests.

Arrange medical care and trauma-informed counseling as soon as possible. Health professionals can document injuries and psychological impact, and provide crisis stabilization and ongoing therapy. National resources offer confidential help 24/7 and referrals for child and family support; this care complements law enforcement action and supports your legal claim with accurate clinical records. For ongoing recovery, agencies emphasize trauma-informed approaches that prioritize safety, trust, and empowerment for children and caregivers.

We evaluate potential claims when a child is groomed or otherwise exploited through Roblox, Discord, Snapchat, or Meta-owned apps and suffers harm. In Texas, a minor’s claim is brought by a parent or legal guardian, while an adult survivor can file in their own name for childhood abuse, subject to Texas’s limitation periods. Parents may also claim the child’s medical and counseling bills paid by the family. In fatal cases, wrongful-death and survival claims may apply. Each Roblox lawsuit is unique and begins as an individual case. However, cases may be coordinated in mass-tort or MDL proceedings for efficient, consistent pretrial management.

Evidence drives outcomes. We recommend preserving original communications (chats, DMs, voice/video), attachments, and metadata from Roblox, Discord, Snapchat, Instagram, or Messenger. Capture usernames, unique IDs, server names, profile links, and any alternate handles. Keep device backups and platform data exports; avoid altering or “cleaning” devices. Retain Robux purchase histories, gift-card codes, payment-app receipts, and card statements tied to grooming or coercive demands. Save police incident numbers, NCMEC CyberTipline reports, and support tickets or preservation notices. Maintain accurate medical and therapy records, as well as a clear timeline of events. This documentation strengthens any Roblox lawsuit and supports efficient coordination in MDL or mass-tort settings.

Texas law allows survivors to recover both economic and non-economic losses. The categories below reflect what courts may award when the evidence supports each of the elements.

Economic damages include the costs of necessary medical and psychological care for the child (e.g., hospital bills, prescriptions, trauma-focused counseling, psychiatry, and related treatment). In Texas, recovery of medical or health-care expenses is limited to the amounts actually paid or incurred by or on behalf of the claimant.

Texas law allows survivors to recover non-economic damages, which cover the intangible but very real impact of abuse. Under Tex. Civ. Prac. & Rem. Code §41.001, non-economic damages may include mental pain, emotional anguish, loss of companionship, disfigurement, physical impairment, harm to reputation, inconvenience, or loss of enjoyment of life. These categories reflect the wide range of suffering a child and their family may endure following exploitation. Non-economic damages ensure that compensation accounts for more than just financial losses.

Exemplary damages in Texas are available only when the claimant proves—by clear and convincing evidence—that the harm resulted from fraud, malice, or gross negligence, and the jury is unanimous on liability and amount. This remedy is punitive (to punish) rather than compensatory in nature.

Roblox, Discord, and online platform lawsuits do more than seek compensation for a single child—they drive systemic change across online platforms. When families pursue claims, courts can issue consistent rulings and injunctive relief that reshape product design, moderation, and age-assurance practices. The result is broader safety for children in Houston, across Texas, and nationwide.

Texas law grants survivors of childhood sexual abuse an extended time to pursue justice. Under Tex. Civ. Prac. & Rem. Code §16.0045, a lawsuit for personal injury must generally be filed within 30 years from the date the cause of action accrues if the harm results from offenses such as sexual assault of a child, aggravated sexual assault of a child, or continuous sexual abuse of a young child or disabled individual. While this law provides a lengthy window, acting promptly remains essential, as evidence may be lost and witness memories can fade over time.

At Kherkher Garcia, we move quickly on the things that matter most: your child’s safety and the preservation of proof. From day one, we help you lock down accounts, capture original chats and files (with timestamps/metadata), and send preservation letters to Roblox, Discord, Snapchat, and Meta so critical data isn’t lost. With your permission, we coordinate reports to law enforcement and NCMEC/ICAC, and line up certified forensic imaging so devices aren’t altered. We seek court protections that shield a minor’s identity (use of initials, protective orders, sealing where appropriate). We also connect families with trauma-focused care in the Houston area and build a practical plan for school, devices, and ongoing monitoring—without requiring your child to repeatedly retell painful details.

Your case is built on two tracks—individual justice and systemic accountability. We reconstruct the digital trail across platforms, retain child-psych and economic-loss experts, and document therapy, medical care, and non-economic harms recognized under Texas law. We pursue negligent design and safety-failure claims, serve targeted discovery on platform defendants, and use subpoenas to third parties that hold key data. Venue strategy is handled in-house: filing in Texas courts when appropriate or coordinating your Roblox lawsuit within federal mass-tort or MDL proceedings for shared discovery, efficiency, and consistent pretrial rulings. We litigate toward durable reforms and full compensation, and we do it on contingency—no fees unless we recover for your family.

Child exploitation linked to Roblox, Discord, Snapchat, or Meta demands fast action. Call Kherkher Garcia LLP at 713-333-1030 now for a free, confidential consultation. Early steps can strengthen a Roblox lawsuit. We will preserve chats and files, request platform data, and map your legal strategy quickly and efficiently. No upfront fees. Serving families in Houston and across Texas.

Steve Kherkher is passionate about serving his clients. He has dedicated his life to championing the rights of those who have experienced catastrophic injury due to negligence.

Steve Kherkher, along with Trial Lawyer Jesus Garcia, founded Kherkher Garcia, and under their leadership, the firm achieved unprecedented success within its first three years.

With a career spanning over 35 years, Steve’s tireless pursuit of justice for his clients has earned him national recognition and numerous accolades as an exemplary trial attorney.

This page has been written, edited, and reviewed by a team of legal writers following our comprehensive editorial guidelines. This page was approved by attorneys Steve Kherkher and Jesus Garcia Jr., who have more than 50 years of combined legal experience championing the rights of those who have experienced catastrophic injury due to negligence.

Connect with a Kherkher Garcia trial lawyer today to pursue maximum compensation for your injury.